We all assume that a mirrorless or DSLR camera is going to pulverise an iPhone on image quality, but there are degrees of pulverisation. Questions, my dear reader, come into play, like…

Are we talking about shooting in good light or poor light?

Are we comparing a fixed focal length lens to the equivalent on the iPhone X?

How big are we going to print?

……and so on. It’s not a straightforward comparison to make.

On the surface it seems ridiculous to even consider making this comparison, the outcome is evident before we even start….. or is it?

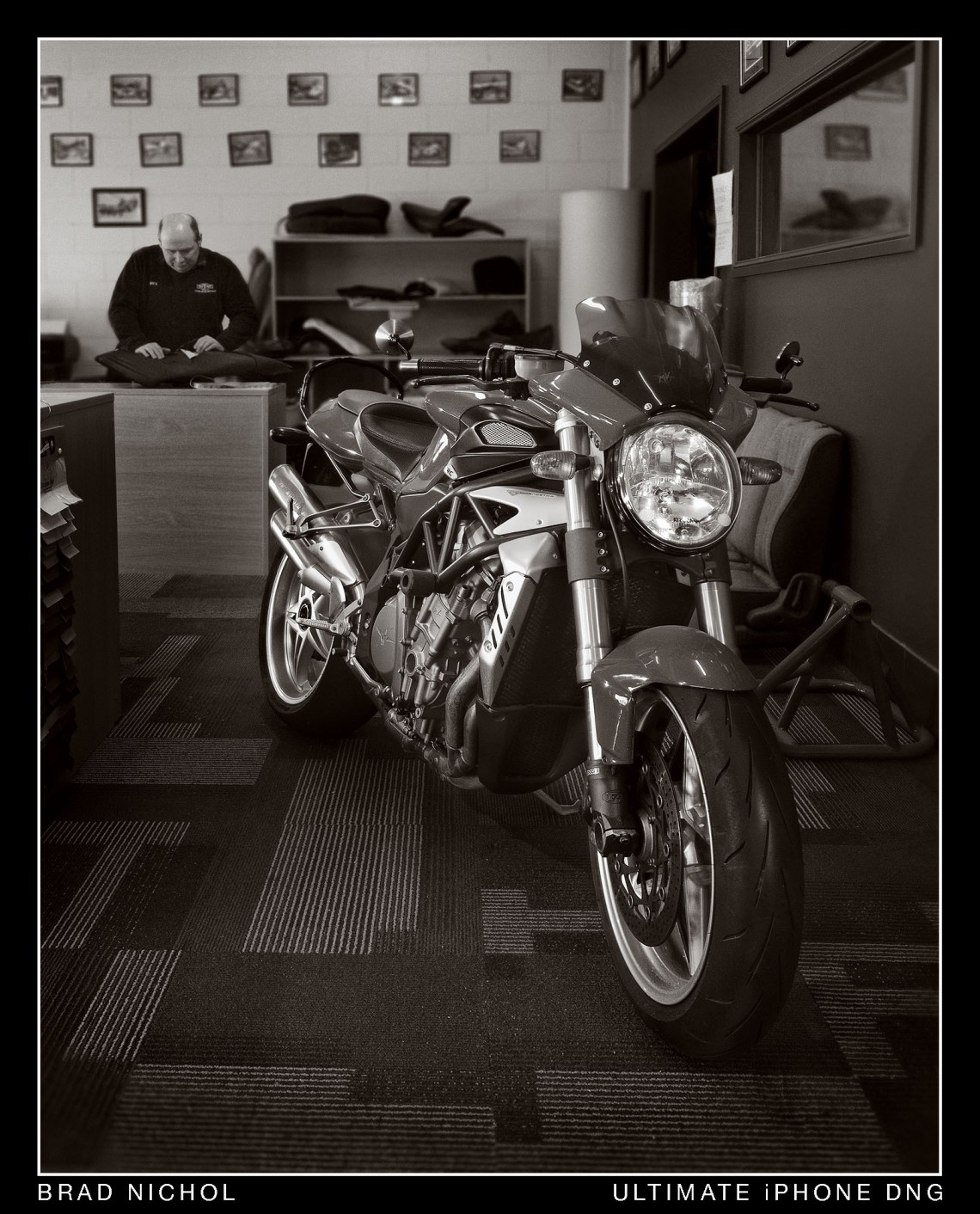

When I was testing the telephoto lens on the iPhone X and 8 models I came to a conclusion pretty quickly that these dinky little glass constructions were mighty fine from the optical perspective, so that raised my initial question. How might the iPhone in telephoto mode compare to my M4/3 camera at the same focal length?

Which then led to another question, well then what about the standard wide-angle lens….

Which begat the idea of what if I shot a mild panorama and compared that to a wide angle on the M4/3…….

Which naturally led to, yeah, but how about cropping the telephoto shot and seeing how it compares to M4/3 with a 40mm lens…….

And on it goes, so many questions, so many possibilities.

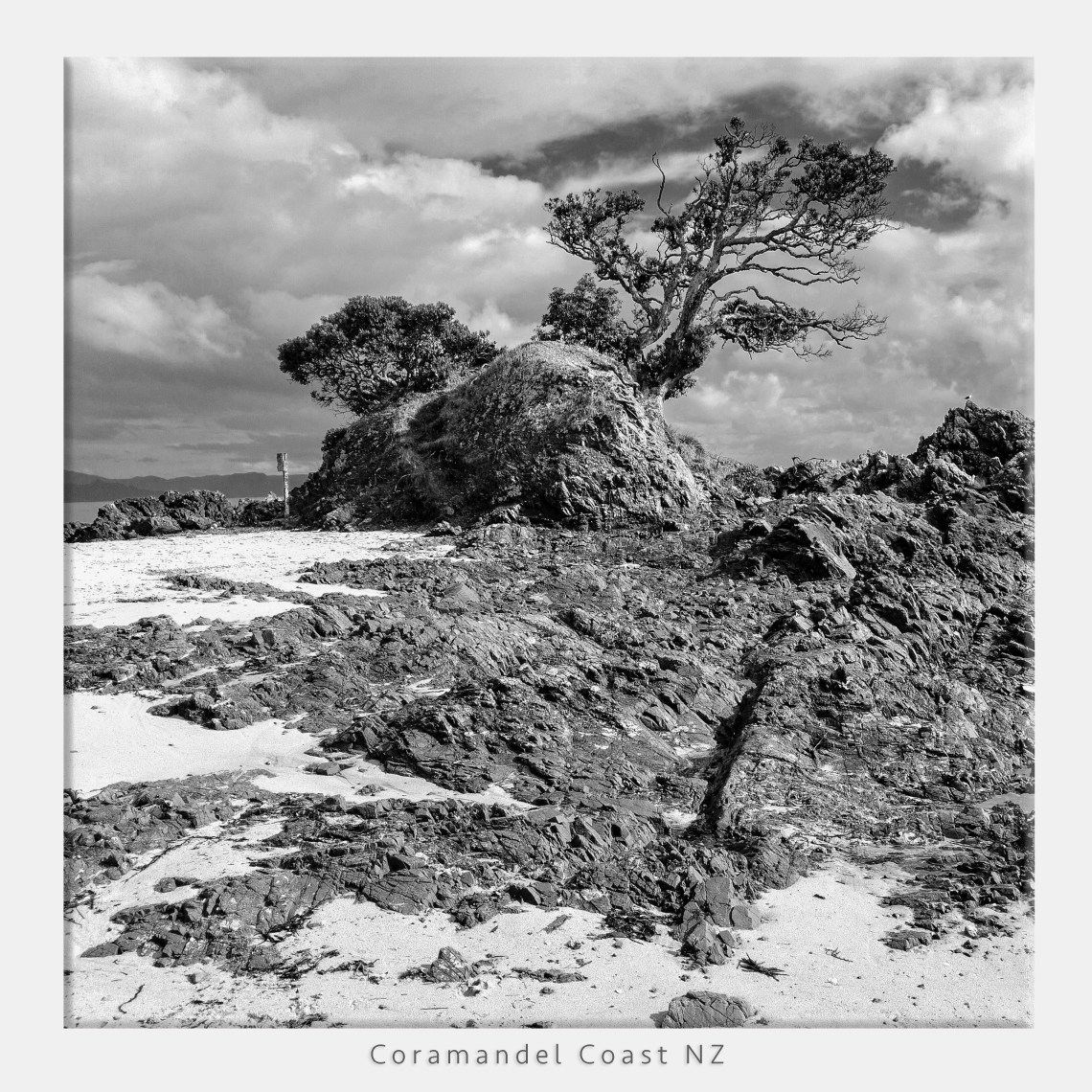

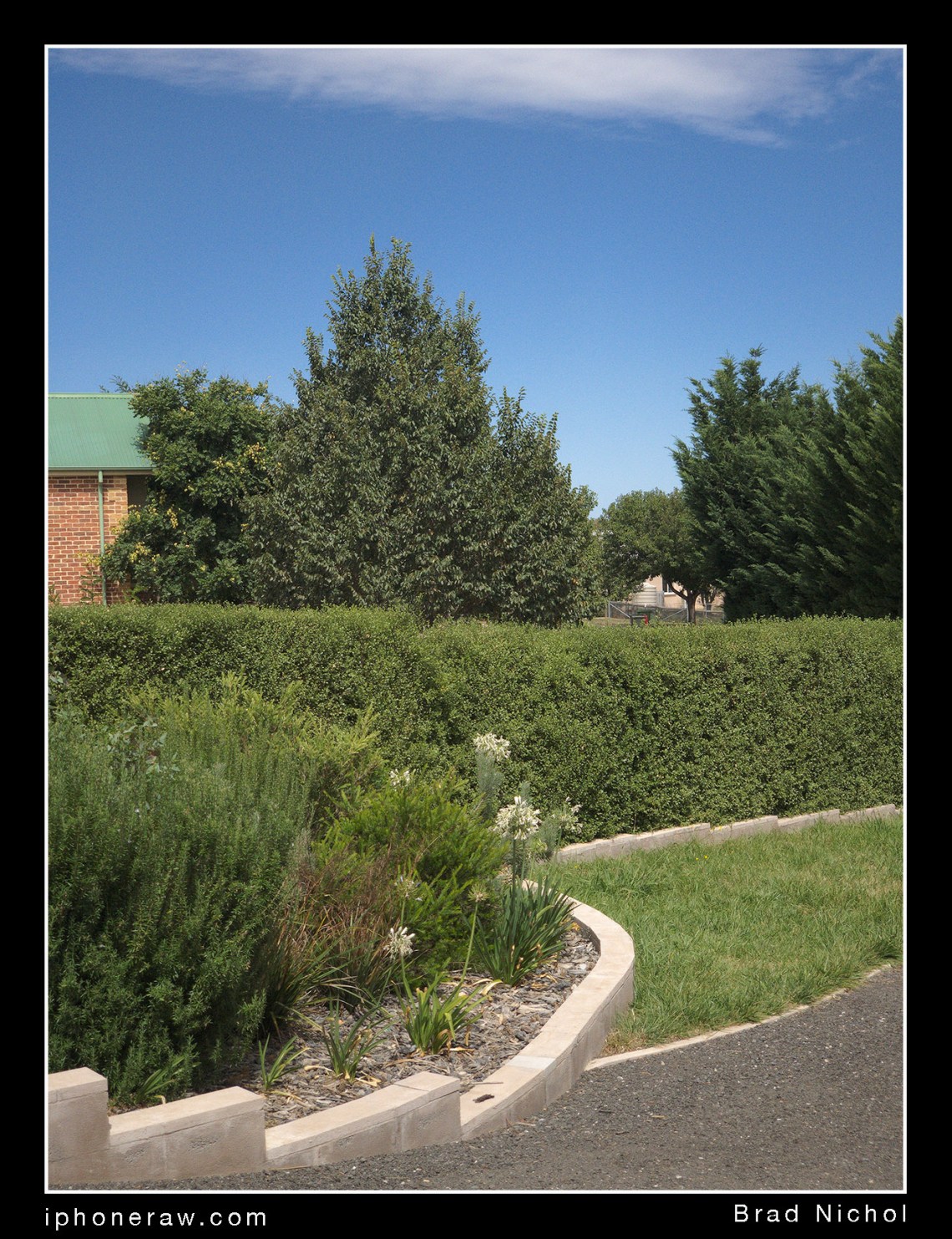

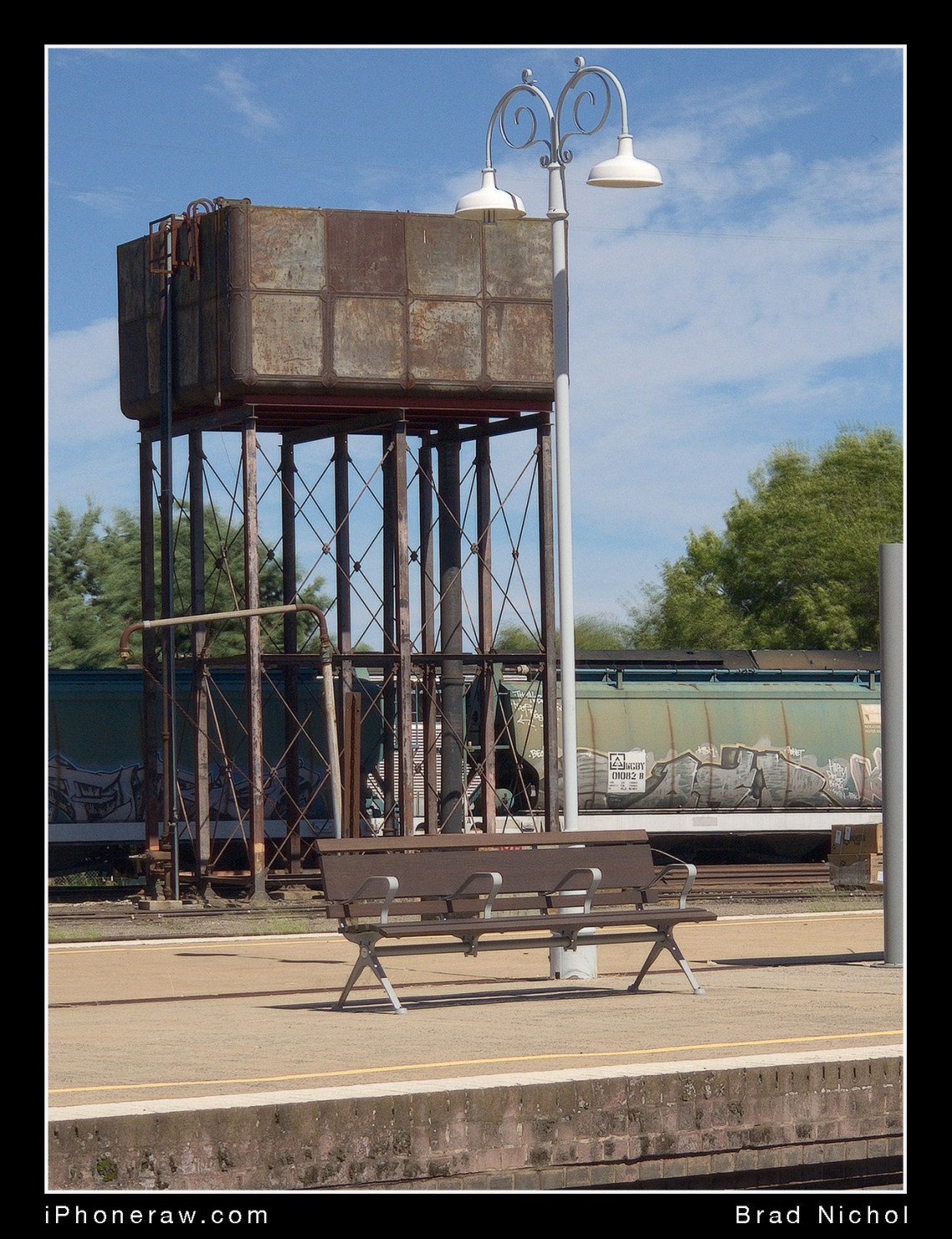

So here we are with my, “let’s shoot the same test pics at my trusty test location on both devices and see what flows from that”.

Students and iPhones

I’ve had more than a few students in my classes claiming their iPhones produced photos as good as their DSLR and mirrorless cameras. I don’t discount their claims either, but like you, I want to see some hard evidence.

To be fair we can’t make comparisons under very low light situations (though I have, but that’s another story), that would be kind of meaningless, we can take it to the bank that a small sensor camera like the iPhone will be severely disadvantaged and underwhelming in low light. But what about in reasonable to good light, you know, the sort of light that most folks have when they take their holiday snaps etc. I never get students making grand claims about shooting starfields with their iPhones etc. All of these “iPhone boosters” are talking about regular daylight type stuff.

NOTE: I have run a series of tests on the low light options including stacking, HDR etc. If you want a sneak peek at one of the single frame capture comparisons, have a look at this article on my other blog:

https://braddlesphotoblurb.blogspot.com.au/2018/03/low-light-and-high-contrast-m43-and.html

Of course, my students are referring to JPEG output, I’m more interested in absolutes, so it’s DNG/RAW only here guys, but a tremendous RAW result should translate to a good JPEG result unless the camera maker is doing something completely crazy in the processing department. (We shouldn’t discount the power of all the computational imaging methods used for iPhone compressed files either, but that’s too much to deal with for one article)

Obviously, a comparison like this is not about depth of field rendering abilities, if you’re seeking shallow depth of field control, you wouldn’t be using a smartphone in the first place, so I’m not going to entertain any arguments/comments about DOF.

I chose to compare the iPhone X Tele to my Olympus EM5 MK 2, which is an M4/3 format device. Lens choice on Mr Oly was the 12-40 f2.8 zoom, it’s a well-known player regarded as a stellar performer. The Olympus was shot at the lowest 200 ISO (regular range) and the iPhone at 16/25 ISO. In other words, I tried to make it optimal for both devices.

All tests have been carried out using RAW/DNG files, and the processing of those files was handled by Iridient Developer on my Mac 5K. Iridient was also used for images that needed to be either upsized or downsized. Generally, I think I’ve done a reasonable job of making the comparison fair.

Aperture-wise I chose to use f5 on the Oly to give roughly the same depth of field look regardless of the focal length for both devices, the shutter speeds on each camera generally ended up being pretty similar, within 1/2 stop typically, so it makes for a fair comparison from a utility perspective.

Both devices shoot natively in a 4:3 aspect ratio, and I chose to compare the results for 12mm, 14mm, 17mm, 25mm and 40mm on the Olympus, which correspond to roughly 24/28/35/50/80mm on full frame cameras. To make the comparisons, some of the iPhone images have to be cropped and upsized or, in the case of the 12mm equivalent, a two frame panorama stitch made. The chosen focal length range represents what most people would shoot with a kit lens on a DSLR or mirrorless camera. I must emphasise, however, that the Olympus 12 to 40mm is a much higher quality lens than any kit lens, so the results for that side of the equation represent a best-case scenario for M4/3.
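For anyone who wants to check the focal length pairs, here is a minimal sketch of the conversion. It assumes the usual 2x crop factor for M4/3 relative to full frame; the function and variable names are just mine for illustration.

```python
# Assumption: M4/3 has a crop factor of about 2.0 relative to full frame.
M43_CROP_FACTOR = 2.0


def full_frame_equivalent(focal_length_mm, crop_factor=M43_CROP_FACTOR):
    """Return the full-frame equivalent focal length in mm."""
    return focal_length_mm * crop_factor


# The Olympus zoom settings used in this comparison.
olympus_settings_mm = [12, 14, 17, 25, 40]
equivalents = [full_frame_equivalent(f) for f in olympus_settings_mm]

for native, equiv in zip(olympus_settings_mm, equivalents):
    print(f"{native}mm on M4/3 ~ {equiv:.0f}mm on full frame")
```

Note that 17mm works out to 34mm, which everyone rounds to the familiar 35mm.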

It’s hard to get colours and tonal renderings identical, but overall I was more interested in the lens performance and detail. Nonetheless, I did try to get a similar white balance and colour rendering.

There’s an additional complication, the Olympus is 16 megapixels and the iPhone 12 megapixels, so I chose to test it both ways. First, upsize the iPhone files in the RAW converter to match the Olympus and second, downsize the Olympus in the converter to match the iPhone. Neither approach is perfect but what else can you do? In the end, I have presented the files here at the Oly’s 16Mp size, which gives the Oly a bit of built-in advantage I guess.
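To put a number on how much resizing is involved, here is a rough sketch. The pixel dimensions are my assumption of the native file sizes for each device (they are not quoted anywhere in this article), and the helper names are purely illustrative.

```python
# Assumed native pixel dimensions (not stated in the article):
OLYMPUS_PX = (4608, 3456)   # about 16 megapixels
IPHONE_PX = (4032, 3024)    # about 12 megapixels


def megapixels(size):
    """Total pixel count in millions for a (width, height) pair."""
    width, height = size
    return width * height / 1_000_000


def linear_scale(source, target):
    """Linear resize factor needed to take the source width to the target width."""
    return target[0] / source[0]


upsize = linear_scale(IPHONE_PX, OLYMPUS_PX)
downsize = linear_scale(OLYMPUS_PX, IPHONE_PX)

print(f"iPhone: {megapixels(IPHONE_PX):.1f} MP, Olympus: {megapixels(OLYMPUS_PX):.1f} MP")
print(f"Upsize iPhone by {upsize:.3f}x, or downsize Olympus by {downsize:.3f}x")
```

Under those assumptions the linear difference is only around 14%, which is a fairly gentle resize in either direction.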

Finally, I had to think about my target rendering, I could have gone for the web, small print etc. In the end, I felt that judging for a file that could produce an excellent 8 x 11-inch print would suit best as this would easily cover most bases for most folk.
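If you are wondering what that print target means in pixel terms, the sketch below works it out. It assumes a 16 megapixel file of 4608 x 3456 pixels, which is my assumption rather than a figure given above, and the function names are only illustrative.

```python
# Assumed 16 MP file dimensions (not stated in the article).
WIDTH_PX, HEIGHT_PX = 4608, 3456


def pixels_per_inch(pixels, inches):
    """Print resolution along one axis."""
    return pixels / inches


def limiting_ppi(width_px, height_px, long_in, short_in):
    """Lower of the two per-axis resolutions for a print of the given size."""
    return min(pixels_per_inch(width_px, long_in),
               pixels_per_inch(height_px, short_in))


ppi = limiting_ppi(WIDTH_PX, HEIGHT_PX, long_in=11, short_in=8)
print(f"About {ppi:.0f} pixels per inch on an 8 x 11-inch print")
```

That is comfortably above the 300 pixels per inch often quoted as a rule of thumb for quality prints, so this print size leaves some headroom for cropping.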

Initial Comparison

Alright, the obvious question out of the way first!

Yes, of course, the iPhone shows a bit more noise.

Would it matter? Probably not, because I normally couldn’t see the noise differences at a 50% view on my 5K Mac screen, you have to zoom into a 100-200% view to pick it easily. Even then the noise in the iPhone images was in no way objectionable and purely of the luminance variety.

And here’s a fun fact for you, many Pro Printers and Editors actually add noise to the file to make the image print more organically, just the sort of noise the iPhone DNG files exhibit in fact. Trust me, noise is not necessarily the enemy!

Surprises were in store, however. First up, looking at the uncropped standard and telephoto iPhone images on my iMac, it’s easy to see the cross frame sharpness of the iPhone tele and standard lenses is a little more even than the equivalents on the Olympus 12-40 f2.8. That’s a serious wrap, ’cause the near $900 Oz dollar Oly lens is very highly regarded for its consistency, especially in the mid-focal length range.

Even more impressive both iPhone lenses actually seem to natively resolve a little more fine detail. I’m very confident that if you were sitting next to me looking at the image pairs on screen at 100% views, you’d pick the iPhone shots as slightly more detailed and sharper (though a little bit noisier). I realise that sounds heretical, but I stand by those words 100%.

But here’s the clincher, the uncropped iPhone shots are often sharper both when they are up-rezzed to the Oly’s 16 mp and when the Oly is down-rezzed to the iPhone’s 12 mp. I imagine there are quite a few folk who did not want to hear that, I was one of them.

Now I can hear a whole bunch of Sony, Nikon and Canon fans out there saying so what, APSC is bigger than M4/3 and it will eat your puny iPhone Tele and Oly for lunch and then take your afternoon tea as well. Mmmm, I wouldn’t be too sure about that, I didn’t test that, but I have tested enough of all of those brands and their kit lenses to make a couple of comments that just might have some bearing on this comparison.

Yes, the APSC models will have lower noise, but in good light like this at low ISOs, the difference between APSC and the M4/3 sensor is small, small enough to be a non-issue. More importantly, the lenses for APS-C are very rarely as good as the 12-40mm Oly is. If you were comparing to any Nikon, Sony or Canon kit lens then forget it, I’ve tested several, they’re not even close, you’d have to be talking about a Pro-Grade lens.

I’d suggest in the washup the central core of the APSC images will be comparable maybe a tad better, but the outer edges and corners will be worse than the iPhone X lenses. But I’m happy to be proved wrong if someone wants to do some rigorous testing (using correctly processed raw files of course).

APSC cameras also have a different aspect ratio, so in an “apples to apples” comparison, you need an 18 mp APSC to directly compare to the 16mp M4/3 regarding matching the vertical dimension. Frankly, there’s very little difference between the 20 and 18mp sensors in performance or resolution, so I expect it would only be with the 24mp sensors that an APS-C camera may gain some resolution ground, and only if the lenses were really up to snuff.
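The 18 mp figure falls out of simple aspect ratio arithmetic, sketched below. I am assuming a 16 mp M4/3 file is 3456 pixels high and that APSC sensors are 3:2, neither of which is spelled out above, so treat the numbers as a rough check rather than gospel.

```python
# Assumed pixel height of a 16 MP M4/3 file (not stated in the article).
M43_HEIGHT_PX = 3456


def megapixels_for_height(height_px, aspect_w, aspect_h):
    """Megapixels of a sensor with the given pixel height and aspect ratio."""
    width_px = height_px * aspect_w / aspect_h
    return width_px * height_px / 1_000_000


m43_mp = megapixels_for_height(M43_HEIGHT_PX, 4, 3)    # 4:3 format
apsc_mp = megapixels_for_height(M43_HEIGHT_PX, 3, 2)   # 3:2 format (assumed for APSC)

print(f"4:3 at {M43_HEIGHT_PX}px high: {m43_mp:.1f} MP")
print(f"3:2 at {M43_HEIGHT_PX}px high: {apsc_mp:.1f} MP")
```

The extra pixels on the 3:2 sensor all sit on the left and right of the frame, which is why the vertical dimension is the fair one to match.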

So if the iPhone lenses are possibly sharper, what about the dynamic range, vignetting, chromatic aberration and all that other stuff everyone pixel peeps and gets excited about?

Chromatic Aberration.

The Olympus 12-40mm f2.8 is an excellent performer for CA, it shows very little if any at most focal lengths and is easily corrected. In short, it’s vastly better than most kit lenses, many of which are dismal CA performers only made good by extensive software correction in the processing stage.

But take a look at the sample frames made from the left side of the 14mm shot, these are uncorrected RAWs. If you compare the Oly and the iPhone X 4mm lens, it’s clear who is eating whose lunch as far as CA goes.

The iPhone X lenses are as close to CA free in the native state as I’ve ever seen, you have to zoom in to 200% or so to see any CA at all, and correcting it is not really required.

I know photographers gloss over CA performance, saying it’s easily fixed in software, but you’ll always get better results from a lens that requires no correction. The CA fix softens the edge and corner detail a little, anyhow the iPhone X lenses look to be gems of modern lens design in this regard.

These two crops also show that if you factor the iPhone’s higher noise out of the equation, it displays slightly more detail on the edges of the frame than the Oly does at 14mm in the native RAW state.

Vignetting/Distortion

To see the vignetting in pics from either device you have to turn off the RAW converter’s built-in profile, resulting in the somewhat rubbish looking images below which reveal the unvarnished truth. For this test, the Oly is set at 14mm and the iPhone X on the 4mm standard lens.

Those two pics are straight extractions with everything zeroed out except for a little brightness/gamma boosting to make things a bit clearer for you all to see.

Neither of the devices shows any issues with vignetting at 25mm on the Oly or with the iPhone X’s 6mm Tele, so I have not included samples here.

It’s an interesting exercise which reveals a couple of significant points. First, the Olympus lens is very even in the scheme of things, most kit lenses show vastly more vignetting than this in their native state. On the other hand, the iPhone X 4 mm lens is not exactly a weak performer either, sure there’s vignetting, but it’s only in the sky areas that this is really obvious and regardless, it’s well within the easily fixable range.

Next, we can see that the Olympus lens has a much broader angle of view when the lens profile is disabled, this is normal by the way, it allows for software correction of distortion, and folks, we do have some reasonably noticeable barrel distortion here.

The iPhone X 4mm has virtually zero distortion, I tried flicking the profile on and off and couldn’t really see any difference, and I can’t see any noticeable curvature of straight lines in this sample. That’s great news because it means the edges and corners of the image don’t get degraded by the correction process, all of which probably explains why I found the processed 14mm shots from the Oly were not quite as well resolved as the iPhone X 4mm along the edges and into the extreme corners.

Dynamic Range

Well, oddly there’s very little difference between them, at least for the shots I took on both cloudy and sunny days, (note: cloudy days are more demanding on the dynamic range than you might think when white cloudy skies are included in the frame). All of the files have similar levels of tonal recoverability, you just get a little more shadow noise with the iPhone shots.

The unprocessed images above show basically the same RGB numbers in both the highlights and deep shadows, though obviously the mid-tone tonality and saturation are far punchier on the native Oly files.

Importantly your editing choices will have an enormous effect on the results so your mileage may vary depending on your skills and software, but either device will render files that are about equally malleable.

I’ve no doubt any full frame camera would eat both alive and spit out the bones, but many APS-C cameras, especially older sensor Canons will be little if any better in this area.

Ultimately I think the limit for both of the X lenses is the noise on the sensor, there are undoubtedly no optical issues to be concerned about, and the native dynamic range is really not too bad at all.

What about Crops?

17mm Equivalent.

This test surprised me, the iPhone X didn’t win, that would be crazy, but it was nothing like a walkover either. Consider that to get the equivalent of the 17mm lens you have to crop the iPhone down to just 8 megapixels and then blow it up to equal the Olympus EM5 Mk2’s 16 megapixels!

The path I took was slightly more circuitous, I cropped the file in the RAW state and then upscaled it 200% on export, tweaked it in Photoshop CC and then resized it to match the Oly’s 16Mp. But my friends, facts are facts, if the pixels and detail aren’t good to start with no process will make any difference, you’ll just end up with more mush than you started with.
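Here is where the roughly 8 megapixel figure for the crop comes from, as a quick sketch. It assumes the iPhone’s standard lens is about a 28mm full-frame equivalent (matching the 14mm Oly setting) and that the crop target is 35mm; the function name is just illustrative.

```python
def cropped_megapixels(sensor_mp, native_equiv_mm, target_equiv_mm):
    """Megapixels left after cropping to a longer equivalent focal length."""
    if target_equiv_mm < native_equiv_mm:
        raise ValueError("Cropping can only narrow the field of view")
    # The crop keeps this fraction of each side, so the area scales by its square.
    linear_fraction = native_equiv_mm / target_equiv_mm
    return sensor_mp * linear_fraction ** 2


# Assumptions: 12 MP sensor, 28mm-equivalent wide lens cropped to 35mm.
remaining = cropped_megapixels(12, native_equiv_mm=28, target_equiv_mm=35)
print(f"About {remaining:.1f} MP remain after the crop")
```

The thing to notice is that the pixel count drops with the square of the crop, so even a mild change in framing eats megapixels quickly.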

At a 100% view, the Oly looks a little more resolved, hell it would be the digital equivalent of walking on water if it didn’t, but here’s the thing, at a 50% view or for an 8 x 11-inch print size the images look almost identical regarding detail. Differences? The Oly shows less luminance noise in the blue sky and very dark greys, and the tonality on the iPhone shots looks more filmic and to my eyes nicer (Yes I could probably get a perfect match, but oddly the Oly files just don’t seem quite as flexible).

There would be another way to skin this digital cat, you could shoot a two frame stitch with the Telephoto lens on the iPhone. Judging from extensive tests I have made with that lens, I’m pretty sure the iPhone would then wipe the smile right off Mr Oly’s face.

Honestly, I reckon most people could shoot the iPhone X wide angle in DNG and crop the image to 17mm equivalent (or 35mm for you FF shooters) and get a result that is absolutely fine for 95% of non-pro needs.

80mm Equivalent.

Whoa there, now we are really stretching the bounds of credibility because to pull this trick off you are turning just 5.2 megapixels of iPhone X tele into 16 megapixels. Doesn’t sound like much of a contest, does it?
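To show just how big a stretch that is, here is a small sketch using the two figures quoted above, 5.2 megapixels in and 16 megapixels out. The function name is mine, and the calculation says nothing about how good the result looks, only how much enlargement is being asked for.

```python
import math


def linear_upscale(source_mp, target_mp):
    """Linear (per-side) enlargement needed to go from source to target megapixels."""
    return math.sqrt(target_mp / source_mp)


factor = linear_upscale(source_mp=5.2, target_mp=16)
print(f"Each side must grow by about {factor:.2f}x ({factor * 100:.0f}%)")
print(f"Roughly {16 / 5.2:.1f} output pixels per captured pixel")
```

In other words, about two out of every three pixels in the final file are invented by the upsizing step.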

So you have two options here to get your 80mm full-frame equivalent, upsize the RAW file after cropping before export and make the most of a single frame or take a multi-frame capture and then stack and blend them in Photoshop. I tried both.

Now neither of the above approaches gave a result that looked close to the Oly’s 40mm shot at a 100% view, but I was surprised that a 50% view seemed about the same in detail and I kind of preferred the cropped non-stacked version of the X file. This held true for the 8 x 11-inch test print crop as well.

The stacked version comes tantalisingly close for detail, but it just looks a little more forced and digital at a 100% view.

In the end, I’d have no qualms at all in cropping the iPhone X frames to the equivalent of 30-35mm, I feel the 40mm crop is excellent for web stuff and moderate size prints, but if I need to blow the image up, I’d much rather start with the Oly.

I imagine that if you saw any of these versions in isolation, you’d be perfectly fine with the print or screen image, so from a practical point of view, yes, you could shoot your iPhone X RAW in the tele mode and severely crop for that longer tele framing.

Say what about 100mm?

Got to be kidding, right?

You’re surely not going to get a great result with a crop like this from a single frame, but I did try an 8 frame stack which involved cropping the raws, exporting at 16 megapixels then doing a blend of the 8 frames in Photoshop. This is not as radical as it might seem, there are several iPhone Apps that do something very similar on the iPhone in either JPEG or TIFF.
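For those curious why stacking helps at all, the sketch below simulates it. To be clear, this is a plain mean stack of a flat grey patch with made-up Gaussian noise, not the blend I used in Photoshop, and it assumes the frames are already perfectly aligned and the noise in each frame is independent. All names and numbers are illustrative.

```python
import random
import statistics


def simulate_frame(true_value, noise_sigma, n_pixels, rng):
    """One noisy capture of a flat grey patch (Gaussian noise is an assumption)."""
    return [rng.gauss(true_value, noise_sigma) for _ in range(n_pixels)]


def mean_stack(frames):
    """Average aligned frames pixel by pixel."""
    return [sum(pixel_values) / len(frames) for pixel_values in zip(*frames)]


rng = random.Random(42)  # fixed seed so the run is repeatable
frames = [simulate_frame(128.0, 10.0, 20_000, rng) for _ in range(8)]

single_noise = statistics.pstdev(frames[0])
stacked_noise = statistics.pstdev(mean_stack(frames))
improvement = single_noise / stacked_noise

print(f"Single frame noise: {single_noise:.2f}")
print(f"8-frame stack noise: {stacked_noise:.2f}")
print(f"Improvement: {improvement:.2f}x (theory: {8 ** 0.5:.2f}x)")
```

Under those idealised assumptions the noise drops by about the square root of the number of frames. Real handheld frames need aligning first and the gains are usually a little less tidy.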

So what did that taste like?

Well not perfect by any stretch but the result would be excellent for a 5 by 7-inch print or for web use, with the right stacking/blending method the result is likely better than you might expect.

And the Panorama Option?

I’ve got all sorts of lenses in several formats, but the one thing I don’t have is an ultra wide M4/3 jobbie, 12mm is as wide as I can go, which by the way is usually just fine. Sometimes I want more, and I’ve become pretty adept at making holiday panoramas that cover the 8 to 12mm range with my M4/3 gear using stitch options.

Simulating the equivalent of 12mm with the iPhone is simple enough, just shoot two frames in the vertical orientation with about 20% overlap and stitch them in your favourite editing application. I prefer Photoshop CC, but many other apps will also do a great job.
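As a rough guide to what the two-frame stitch gives you in pixels, here is a small sketch. It assumes a portrait iPhone frame of 3024 x 4032 pixels (my assumption, not a figure from above) and ignores the warping and trimming a real stitch involves, so the true result will be a bit smaller.

```python
# Assumed iPhone frame size in portrait orientation (not stated in the article).
FRAME_W, FRAME_H = 3024, 4032


def stitched_width(frame_width, n_frames, overlap_fraction):
    """Width of n side-by-side frames that share the given overlap."""
    shared = frame_width * overlap_fraction * (n_frames - 1)
    return round(frame_width * n_frames - shared)


width = stitched_width(FRAME_W, n_frames=2, overlap_fraction=0.20)

print(f"Stitched canvas: {width} x {FRAME_H} px "
      f"({width * FRAME_H / 1_000_000:.1f} MP before cropping)")
print(f"Aspect ratio: {width / FRAME_H:.2f}:1")
```

Handily, with about 20% overlap the stitched canvas lands very close to the 4:3 shape of a single frame, just with a wider view and a lot more pixels.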

The more obvious alternative is to use the Panorama mode on your iPhone via the standard App, again this is super easy, but the results are nowhere near as good as those you can achieve via the DNG/RAW file image stitch pathway. The attached pics make the differences easy to pick.

The iPhone Panorama mode produces nicely sharp results, surprisingly sharp in fact, but there are a few deficits compared to the two other options. First, you get an image which doesn’t have straight lines, and while it’s possible to correct this in editing, it’s by no means easy to do well.

Second, even if you’re very careful and keep everything entirely on the level, you’ll still get small stitching errors which ruin the result, though you probably won’t see these in small prints and on-screen views.

The last issue with the panorama mode relates to the rendering of highlight tones and colours. Highlights are often severely bleached/clipped and colours sometimes a bit over-cooked. You can control the exposure, but it’s usually impossible to get an exposure level that gives an optimal result for the entire image, especially skies.

Ultimately you could use the Panorama mode as an alternative in many situations, provided you wanted a quick and dirty result, but post editing an in-iPhone panorama to get a top-drawer final result is likely far more work than the other two RAW options, and remember the panorama mode is a JPEG once exported.

The Olympus 12-40mm f2.8 is really stellar at the 12mm setting, you’d have to be super picky to find fault, and honestly, for an overall result it wins the contest, but it’s very close. In the centre of the image, there’s nothing to pick between the iPhone two-frame stitch and the Oly @ 12mm for clarity. It’s only on the edges of the frame where the necessary panorama transformations and slightly higher iPhone noise level conspire to allow the Oly to win the contest, but again you’d need to be pixel peeping at a 100% view to see it. I seriously doubt you’d notice any differences in clarity between them in an 11 by 8-inch print.

Tonally for this, I preferred the look I got from the iPhone DNGs, the highlights look nicer though the shadows are an even contest, meaning, the dynamic range seems to be about the same, heretical I know but I stand by that statement.

In the end, the only downsides in shooting the wide shots with the iPhone X are that it doesn’t suit moving objects, you lose the instant “in-camera” result, and there’s a little extra work in the editing phase. On the other hand, you probably have the iPhone on you anyway, the results give next to nothing away in detail and actually the process is more flexible as you can easily go much broader if you want from the same two captured frames. Personally, I wouldn’t hesitate to go down this path when I need something wider than my M4/3 gear offers or don’t have the Oly with me.

What to Choose and Why?

So there it is, both lenses on the iPhone X are terrific and offer excellent imaging potential for DNG shooters and the iPhone 8 Plus is very similar. That the X competes favourably with my M4/3 gear in reasonable to good light is quite extraordinary.

My iPhone X won’t replace my M4/3 gear, I didn’t expect it would, to do that, low light performance would need to improve by another couple of steps. However, the potential shooting envelope is much wider than with previous iPhone models and the gap between the two formats in “reasonable to good” light is very much closer than I expected and probably closer than you expected also.

And let’s not forget, you’ve been looking at RAWs from both devices processed in a state of the art RAW converter known to extract more detail than most converters. JPEGs from either camera are far less well resolved than the samples shown here and most folks are just fine with the JPEGs, that said, the iPhone gains far more from the RAW/DNG option than the Olympus does.

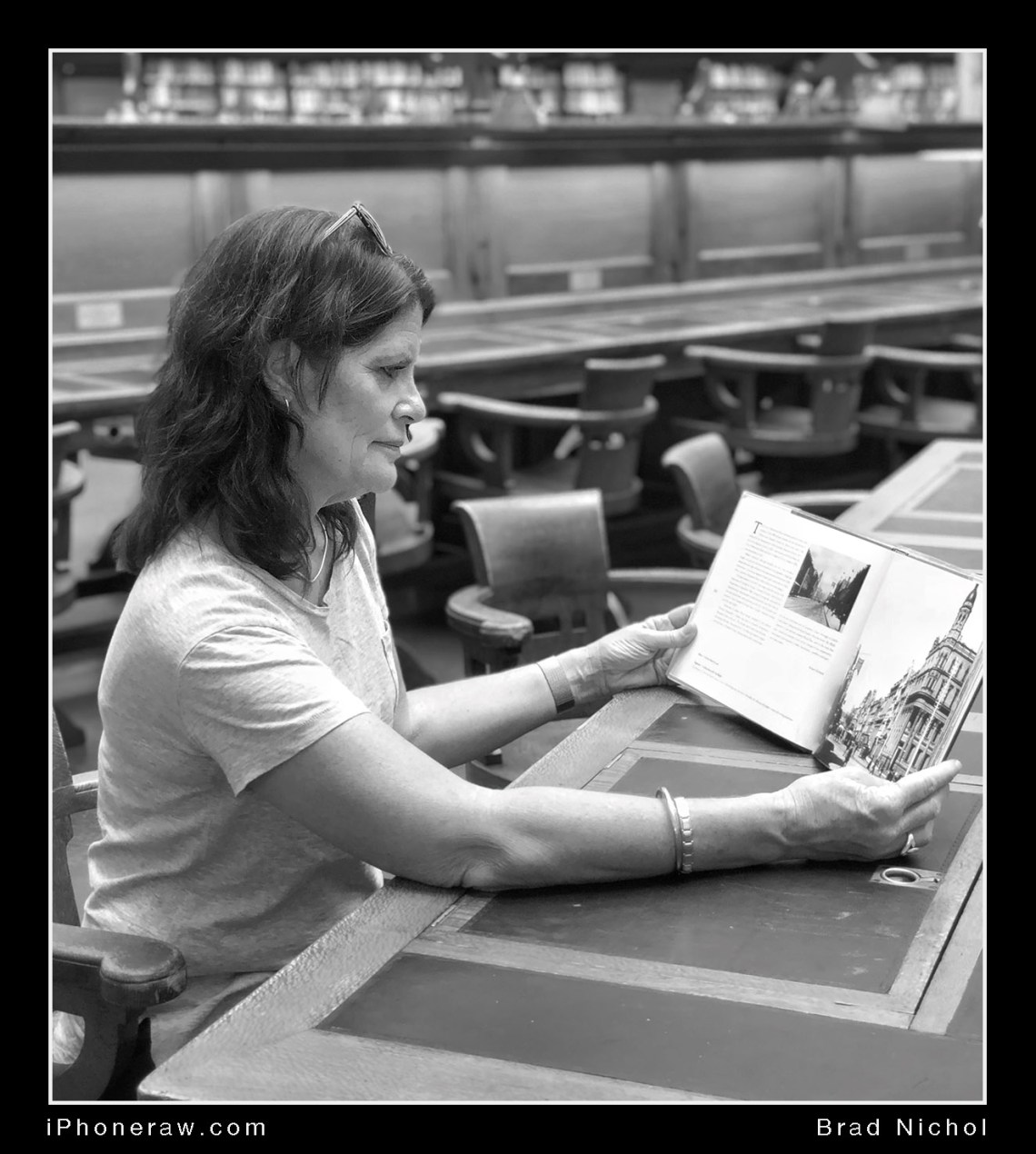

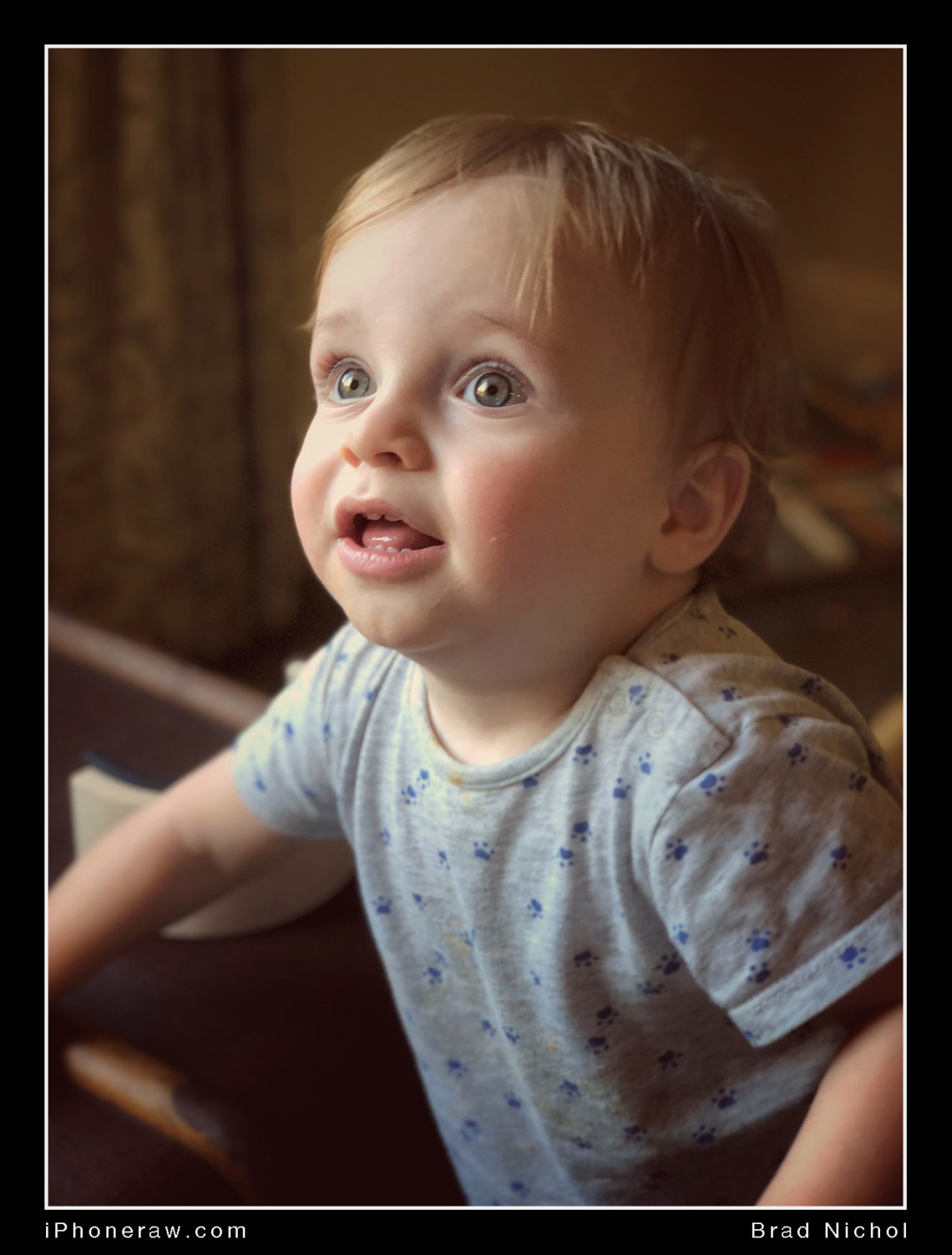

It’s the addition of the tele lens that makes all the difference for the iPhone. I couldn’t consider the single lens models as a complete potential “personal camera” replacement, hence I always needed to have my regular camera with me while on holidays and at family events. The tele lens bridges an enormous gap and the improved image quality of the new iPhone models in RAW/DNG strengthens that bridge.

The most significant impediment for serious shooters would be the lack of depth of field control with the iPhone rather than image quality deficiencies. Truth be told, the majority of photos most folk shoot are not dependent on shallow depth of field to work, and ultimately computational imaging methods may well render this a non-issue within just a few years. The portrait mode on the iPhone and other smartphones is indeed promising.

Ultimately with RAW/DNG files, it’s the potential of the file that matters, if the file is malleable, well resolved and contains all the data you need to push and prod the tones into shape, the results can be excellent. A combination of excellent optics and remarkable sensor performance on the iPhone X and 8 provides a great starting point, if you don’t get good or even great results you’ve either fluffed the capture or haven’t yet nailed the processing end of things.

For now my M4/3 wins, sort of, but in another couple of years, the result could well be a tie or even a loss. In the interim, I’ve no worries about using my iPhone X for a wide array of Photographic tasks in full confidence that quality wise it will deliver.