Most casual iPhone followers think the telephoto lenses in the X and 8 series are the same. They’re not: the aperture is wider on the X (f2.4 versus f2.8) and the lens/sensor module on the X has image stabilisation. Those differences alone are important and should make for better images in many situations, especially if the light level is low. Note, however, that when shooting with the standard camera app the iPhone 8 and X will both revert to a cropped version of the image from the standard lens when the light level really drops off a cliff.

I had several questions I wanted answered regarding the Telephoto lenses and options on the iPhone 8 and X models.

Regarding the comparison between the two models I want to know:

Are the lenses optically identical?

Is there any difference in dynamic range between the two lens/sensor modules?

How does the native “depth of field” rendering compare?

Moving beyond those basics, I also want to know:

How well resolved are the corners?

Does either lens show significant native vignetting?

Are there any issues with Chromatic Aberration?

How good or poor is the image noise?

Eventually, I will answer all of these questions in detail, but for today let’s look at the portrait mode.

In a more general sense, the Portrait mode is fascinating and I thought it would be good to initially look a little deeper into this option. I’ve seen a few comparisons online between the iPhone and Android versions of the concept and though no smartphone cameras have come close to perfectly simulating the shallow “depth of field” you get with your DSLR or Mirrorless camera, the results for most casual needs are more than passable.

I must add that at this point we are looking at first and second generation products. I’ve no doubt the next five years will see enormous gains in the final quality provided by portrait mode options. Your DSLR is certainly not yet a dead end but it will increasingly become a threatened species.

Just so we are clear, some of my questions relate to the compressed HEIF or JPEG files produced by the regular camera apps, specifically the portrait mode option. But I also wanted to uncover the unvarnished truth and determine “the true potential” of the iPhone X Telephoto lens when used optimally, and for that we must look to the DNG/RAW files.

This is going to be an in-depth examination so I’ve decided to break the coverage into three parts.

Part 1 Using the portrait mode

Part 2 Shooting DNG Telephoto on the iPhone X

Part 3 Comparison of the iPhone 8 and X telephoto lenses

Let’s get started then…

Is the Tele Lens any good?

Cutting to the chase first, for those who have not used an iPhone with a Tele lens option, the simple answer is yes. It’s a terrific option and I couldn’t imagine going back to an iPhone without one. The lens quality is solid and produces sharp results, while the focal length helps me, at least, to create photos in situations where I would otherwise have chosen to use a regular camera.

Some testers have pointed out that you’re limited by the lens in low light situations because the aperture is smaller (f2.4) than the wide angle lens (f1.8), true, but it’s nothing like a deal breaker for most needs.
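To put a rough number on that aperture gap, here is a quick sketch in Python. It assumes the marked f-numbers are exact (in practice they are nominal values) and that the light passed scales with the inverse square of the f-number. The function names are mine, purely for illustration.

```python
import math


def light_ratio(slower_f, faster_f):
    """How many times more light the faster lens (smaller f-number) passes."""
    return (slower_f / faster_f) ** 2


def stops_between(slower_f, faster_f):
    """The same gap expressed in photographic stops (one stop = 2x the light)."""
    return 2 * math.log2(slower_f / faster_f)


# iPhone X tele (f2.4) against the wide angle lens (f1.8)
print(round(light_ratio(2.4, 1.8), 2), round(stops_between(2.4, 1.8), 2))  # 1.78 0.83

# iPhone 8 series tele (f2.8) against the iPhone X tele (f2.4)
print(round(light_ratio(2.8, 2.4), 2), round(stops_between(2.8, 2.4), 2))  # 1.36 0.44
```

So the wide angle passes a bit under twice the light of the X tele lens, and the X tele has a little under half a stop in hand over the 8 series tele.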

I far prefer the natural perspective the tele lens provides and in particular I value the ability for more selective composition by virtue of avoiding the background distractions which tend to more easily encroach when I shoot with the standard wide angle lens.

Technically the extra lens is not one you’d normally classify as a telephoto, coming in at around 56mm full-frame equivalent. A 70mm equivalent lens would have suited me better but strict physical limits apply when your camera is only a few mm thick. On the other hand, the 56mm equivalent focal length is long enough to suit a great many needs, there’s a whole bunch of reasons why the 50mm focal length became the most used choice for film photographers…it basically works better more often.

Just in case you are wondering if the Tele lens might give a better result than one of those add-on lenses you’ve seen on eBay, or even one of the more expensive ones, trust me, there’s no comparison. The iPhone Tele eats them alive, especially in the corners of the frame. Not one of the add-on tele lenses I have tried offered anything like sharp corner resolution; maybe there is a perfect option floating around out there but I very much doubt it. By the time you pay for a good quality one plus the mount/case needed to adapt it to your iPhone you’re getting much closer to the price of the iPhone 8 dual lens model, and let’s not even bother getting into the practical aspect of a chunky bit of glass added to your slip-in-the-pocket device.

The Portrait Mode

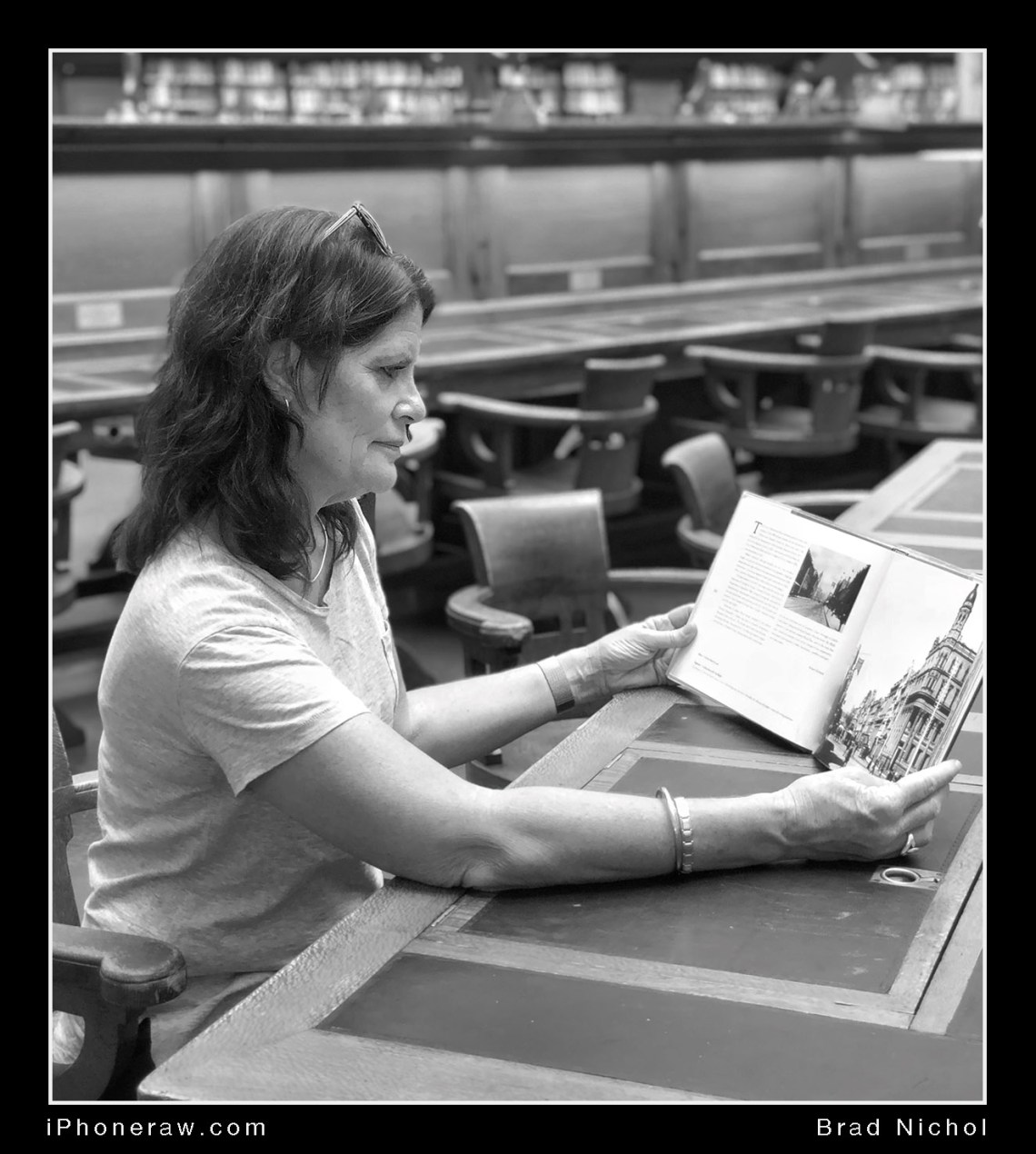

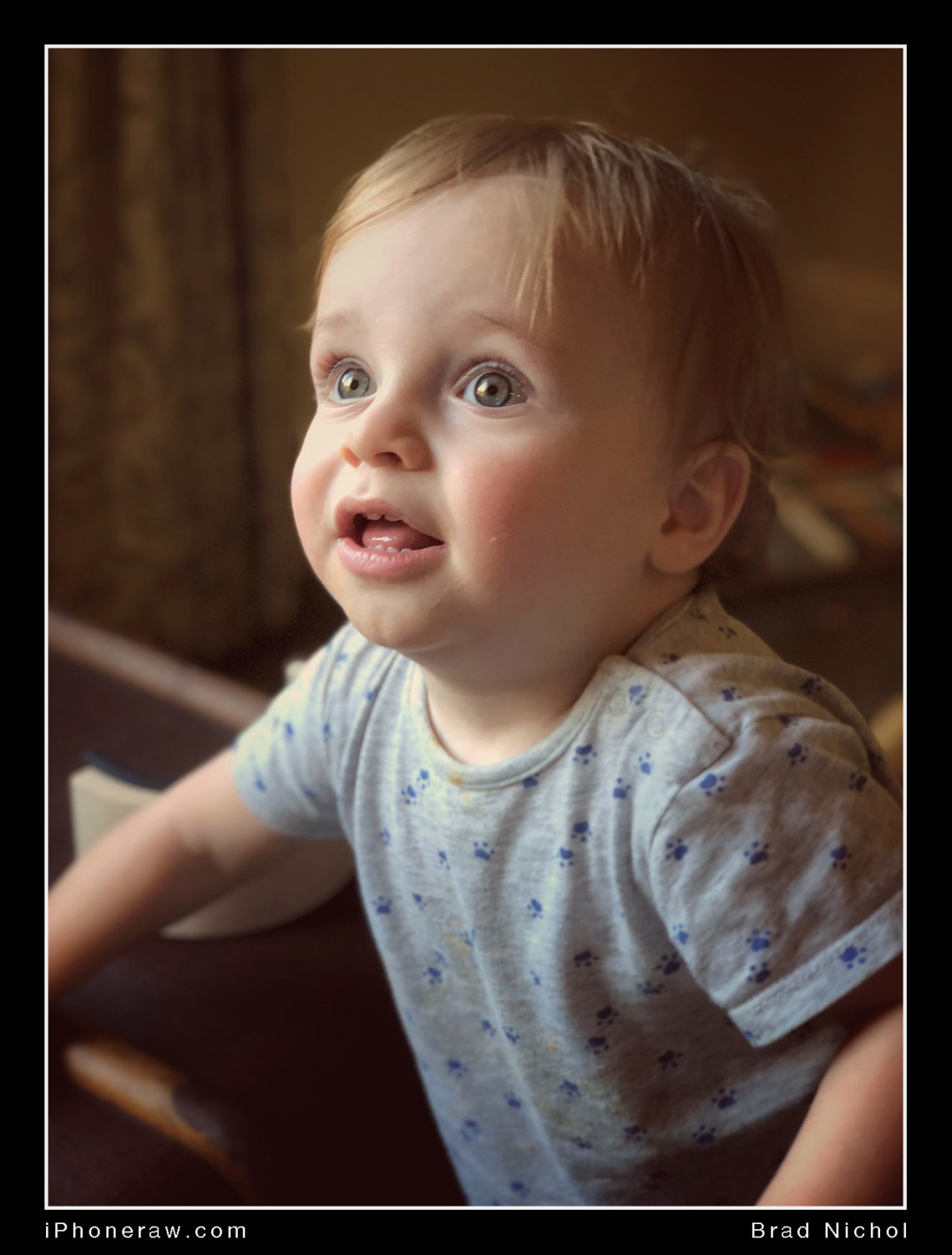

My wife Wendy is a great fan of portrait mode, she feels it gives her noticeably nicer results when taking family pics and especially so when she takes photos of our Grandson, Milton. I’ve included some of her pics in this article as examples.

Wendy is not what you would call a picky photographer, she just loves to take photos of the things in her life that matter or need to be recorded, just like most iPhone camera users. I could, of course, point out to her the technical deficiencies of the mode and she’d just as likely say, “so what”, she’s just a happy iPhone camper.

Here’s the point: most consumers won’t care about the techy stuff that most hobbyists and serious photographers fret over, they just want nice looking portraits with a minimum of fuss. For them, portrait mode using the standard iPhone camera app delivers. For those of us who do sweat the small stuff, well, the tech is only going to improve and we have other options if we want to use them.

On the other hand, I’m sure when the mode is further refined and the results improved most users would notice the benefits but in the interim, most owners have no deal-breaking issues with portrait mode.

Me? I find it OK, really I don’t expect perfection, I am just grateful that it works at all.

But the articles on this site are for those who want to take things to a higher level so critique the portrait mode I must and along the way offer some advice to help you get more out of it.

How does it work?

First up, let’s differentiate between the two parts involved in the option. The basic function is to simulate the shallow depth of field effect you’d expect if you’d shot using a DSLR or Mirrorless camera with a telephoto lens and wide aperture.

The second part is to apply lighting effects to give the portrait a look that channels studio lighting etc. Currently, there are 5 options, natural, studio, contour, stage and stage light mono, no doubt Apple or other third-party app developers will add to this set as time goes by.

At this point I’d say the mode is much better at the former aspect, the lighting effects are very patchy in practice, sometimes the result is great, but often it’s just awful and even casual users, my wife included, have noticed this.

There’s some confusion here because most people, it seems, have assumed that portrait mode refers to the combination of lighting and depth of field effects. Not true, they are separate: the depth of field effect can be turned off “in post” and the lighting effects still applied. To do this you simply hit the edit option at the top of the screen in the Photos app and then tap on the yellow “portrait” tab; the DOF effect is removed but the lighting effect option is still active.

Remember that none of this is destructive, the original image is still intact, we are just applying an instruction set to the image so it looks like the image has been adjusted when we view it….in other words, no pixel is harmed in the process! What is critical however is that the file is shot using the HEIF format because that’s the only way we can save the depth map needed to create the shallow DOF simulation.

You can read about the HEIF format here:

https://www.cultofmac.com/487808/heif-vs-jpeg-image-files/

Ok, all good info, but how does it work? There are two lenses on the iPhone 7 Plus, 8 Plus and X models, and when you shoot in portrait mode both of these are used. The telephoto is used for the image and a combination of the standard wide angle and tele lens is used to create a depth map of the image. Say what! Depth Map!

Yes, that needs a little more explanation.

So we have two eyes, this allows us to gain a great perception of depth when viewing our world because each eye sees a slightly different view, which means we can sort of see around things. The upshot of it all is that our very clever brain is able to take these two images and blend them together so we see in 3D.

Our sense of 3D is excellent for close up objects but as the distance increases the effect disappears, this is easy to, well, see. Close one eye and look at a scene, straight away you have lost the sense of 3D, open them both and we have 3D, that’s obvious, isn’t it.

Now, look at a distant object and close one eye. It looks pretty much identical because both eyes see it from almost exactly the same viewpoint. Now look at something about a metre away and do the same: the difference between what each eye sees is quite profound. It’s simple really, the closer the object, the more pronounced the difference between the views seen by each eye.

With me so far? Good. Now it should be obvious that the views seen by each eye will not line up if you layered them over the top of one another. Closer objects would show more misalignment than mid-range objects, and distant objects would show virtually no misalignment at all.

Now if we measured the misalignment differences we would be able to work out how far from the camera the scene objects are. This is the principle behind rangefinder camera focus systems so there is nothing new under the sun here.
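For those who like to see the sums, the misalignment-to-distance idea can be sketched in a few lines of Python. This is the textbook triangulation formula for two identical, parallel pinhole cameras, not anything Apple has published. The focal length in pixels (3000) and the 1cm lens spacing are hypothetical round numbers I picked for illustration.

```python
def distance_from_disparity(focal_px, baseline_m, disparity_px):
    """Distance to an object from how far it shifts between the two views.

    focal_px     -- lens focal length expressed in pixels (hypothetical value)
    baseline_m   -- gap between the two lenses in metres (hypothetical value)
    disparity_px -- misalignment of the object between the two images, in pixels
    """
    if disparity_px <= 0:
        # No measurable misalignment: the object is effectively at infinity.
        return float("inf")
    return focal_px * baseline_m / disparity_px


FOCAL_PX = 3000     # assumption, for illustration only
BASELINE_M = 0.01   # assumption: lenses roughly 1cm apart

for shift in (30, 12, 3):
    metres = distance_from_disparity(FOCAL_PX, BASELINE_M, shift)
    print(shift, "px shift ->", round(metres, 2), "m")
```

With these made-up numbers a 30 pixel shift puts the object at about 1 metre, 12 pixels at about 2.5 metres and 3 pixels at about 10 metres. Big misalignment means close, tiny misalignment means far away.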

Here’s the cool bit: if we can work out the distance of those objects we could create a map of the scene which can be overlaid on the image and used to control or mask a blur filter. In other words, near objects don’t get blurred and more distant objects or scene elements do.
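Here is a deliberately crude sketch of that masking idea in plain Python, working on a tiny greyscale image stored as a list of rows. It is my own toy illustration and certainly not how Apple does it: the box blur, the two distance thresholds and all the function names are assumptions made up for the example.

```python
def box_blur(img, radius=1):
    """Blur a greyscale image by averaging each pixel with its neighbours."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += img[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def blur_weight(depth_m, sharp_until_m, full_blur_m):
    """0.0 = leave the pixel sharp, 1.0 = fully blurred, with a ramp between."""
    if depth_m <= sharp_until_m:
        return 0.0
    if depth_m >= full_blur_m:
        return 1.0
    return (depth_m - sharp_until_m) / (full_blur_m - sharp_until_m)


def apply_depth_blur(img, depth, sharp_until_m=1.5, full_blur_m=3.0, radius=1):
    """Mix the sharp and blurred images pixel by pixel using the depth map."""
    blurred = box_blur(img, radius)
    result = []
    for y, row in enumerate(img):
        new_row = []
        for x, pixel in enumerate(row):
            w = blur_weight(depth[y][x], sharp_until_m, full_blur_m)
            new_row.append((1 - w) * pixel + w * blurred[y][x])
        result.append(new_row)
    return result


image = [[0, 100, 0, 100]]        # one row of four pixels
depth = [[1.0, 1.0, 5.0, 5.0]]    # first two pixels near, last two far (metres)
print(apply_depth_blur(image, depth))
```

The two near pixels come through untouched and the two far ones are softened. Notice that the first far pixel picks up some brightness from the near pixel next to it, because the naive blur happily averages across the subject boundary.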

It all sounds simple enough but in practice it’s very complicated because a whole bunch of parameters have to be determined. At what distances does the blur stop and start? How much do you blur the image? How can you blur without cutting into fine details like hair, leaves, edges of fabric etc? It’s enough to make a tech-head’s cranium spin.

Creating the depth map is the simple part, the tricky part is the post-capture processing and I have no doubt Apple will significantly improve this given time.

Currently, there is a fair degree of AI used in the processing of the images in Portrait mode and I expect that Apple will increase this over time. This will make a significant difference, and the potential is well demonstrated by the Google Pixel 2 XL. The Pixel has only one lens and relies to a much greater degree on AI for processing, yet it produces great results, sometimes better in fact than the tele portrait option on the iPhone.

At the capture level, the iPhone is hamstrung somewhat by the distance between the two lenses, it’s not much more than a centimetre, which limits the accuracy of the depth map. Basically, the further apart the two capture lenses the more accurately object distances can be measured, now you know why the wide gap between the two viewing windows on a Leica rangefinder is so important, it allows for very precise focus setting, which is especially important when using wide aperture lenses.
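A rough sketch shows why the lens spacing matters so much. Using the standard two-camera triangulation relationship (distance = focal length × spacing ÷ misalignment), a small error in the measured misalignment produces a distance error that grows with the square of the distance and shrinks as the spacing grows. The focal length in pixels, the one pixel measurement error and both spacings below are hypothetical numbers chosen for illustration, not Apple specifications.

```python
def depth_error_m(distance_m, focal_px, baseline_m, disparity_error_px=1.0):
    """Approximate distance uncertainty for a small misalignment error.

    Small-error approximation of distance = focal_px * baseline_m / disparity:
        error ~= distance^2 * disparity_error / (focal_px * baseline_m)
    """
    return distance_m ** 2 * disparity_error_px / (focal_px * baseline_m)


FOCAL_PX = 3000  # assumption, for illustration only

for baseline in (0.01, 0.05):        # 1cm phone-style gap versus a 5cm gap
    for distance in (1.0, 2.5, 5.0):
        err = depth_error_m(distance, FOCAL_PX, baseline)
        print(f"gap {baseline * 100:.0f}cm, subject at {distance}m: +/- {err:.2f}m")
```

With these made-up figures the 1cm gap is good to a few centimetres at 1 metre but is out by roughly 20cm at 2.5 metres and the best part of a metre at 5 metres, while a 5cm gap cuts each of those errors to a fifth.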

A second complication is that both lenses are not identical, so I presume the image from the wide angle has to be cropped internally to build the depth map but this would mean the system is working with a lower resolution image, but bear in mind Apple don’t exactly spell all this out, so who really knows?

If Apple were to increase the distance between the two lenses the accuracy of the depth map would be better and combined with smart processing would probably mean the system would be less prone to blurring fine details and other errors but I doubt that Apple will venture down this path in future, it would compromise the practicalities of the iPhone too much.

There are other ways of building depth maps. One is to take a lot of photos at different distances in very quick succession and then selectively blend the images together using depth information. Another is to use infrared beams to map the scene and create a depth map from that information, which is exactly what the iPhone X does with the front-facing camera. A third is to use artificial intelligence and machine learning to analyse scene elements and make depth judgments based on comparing the relative size of those elements. This last option is the method used by the Google Pixel 2 XL, which also uses information gained from the phase detection focus pixels.

Beyond that, we have sonar, which was used to determine focus with the old but lovely Polaroid SX70. It should also be possible to create a very accurate depth map by taking the actual photo then moving the camera left and right to capture additional frames which could be used for the map but not the photo, there have been examples of cameras doing a version of this too.

Basically, there are many ways to skin this digital cat but for smartphones, practicality is almost always an over-riding issue, in the end, it is likely to be a combination of methods that wins the day.

I suspect the next high-end iPhone will use the iPhone X front camera method for portrait mode on the rear camera to build a more accurate depth map. I’m sure Apple didn’t go to all that trouble on the front facing camera just so we could unlock our phone and take better selfies! I also suspect that long-term the iPhone’s portrait mode will use much more machine learning and probably more sophisticated multi-shot capture methods. Remember, all of these options currently exist in some form or other, we’re not talking about reinventing the wheel but rather combining current technologies in more sophisticated ways.

The main current limitation of the built-in portrait mode is that it only works in a range of around one to two and a half meters due to the small distance between the two lenses and you have no control over the blur effect. There are other third-party apps which now use the depth map to create blur effects so watch this space because I’ve no doubt that even with the current hardware the results could be improved by better processing algorithms and perhaps even capture options.

The new APIs (application programming interfaces) that Apple opened up with iOS 11 have meant that developers are free to create new apps to work with the portrait mode and depth maps. If you want to try the better current alternatives, which by the way allow you to vary and control the DOF effect, check out these options:

Focos

Slor

Anamorphic

infltr

These apps really need their own articles, there’s some serious power on offer, but for today I’ll stick with the standard option as much as possible.

Is “Portrait Mode” just for portraits?

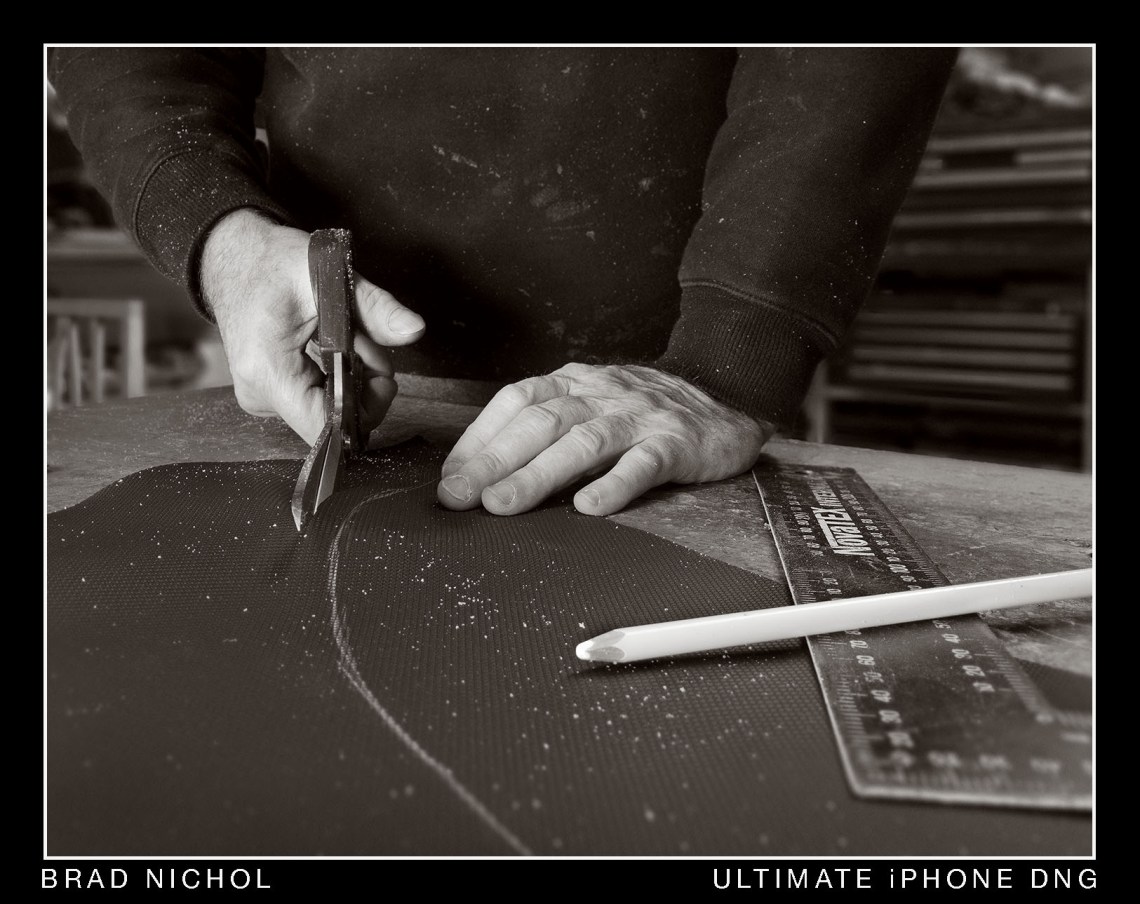

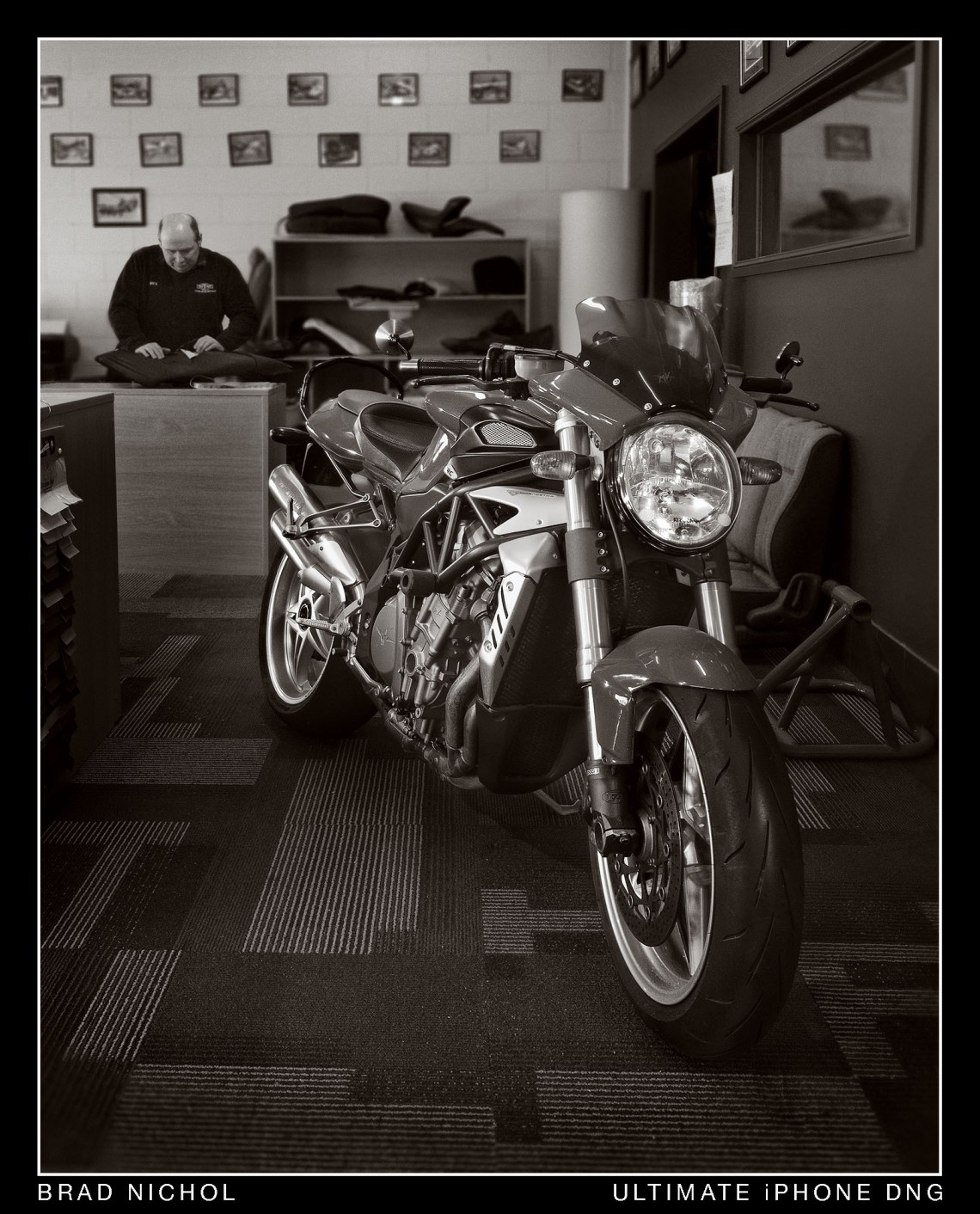

In short no, you can use the mode to capture objects other than heads and a lot of folks are doing just that, some even creating passable results for “product” photography needs.

The front facing camera can also be used to shoot objects but it seems more attuned to portraits and it’s very difficult to get it to work on static objects reliably.

The main limitation is that the mode does not work if you go too close, which in the scheme of things is not very close at all, basically about a meter. I’ve found this close distance is quite variable, sometimes it will work a little closer up. You’ll know if it’s going to work before the shot because it tells you right there on the screen: “move further away”. This limit has nothing to do with the minimum focus distance for the telephoto lens, which is around 35cm.

In theory, the mode should be able to work even better at close range as the difference between the positions of the two lenses should be better suited to more close up shooting distances than for further away, so I’m not really sure why the close distance limit is so far out.

The good news is the effect is seen as a live view, you know up front how the result will look, the bad news is the effect is often too strong, blurring parts of the objects you want to be left alone and when editing in the standard camera app you can only turn the effect on or off, there is no way to paint on the mask (which you do not see at all) to moderate the effect. (Remember there are Third party apps that can solve this limitation).

I’ve seen some pretty good photos of flowers, cameras, hands etc taken in the portrait mode so it’s definitely worth trying, but presently portrait mode will probably prove frustrating when you want consistency, and that’s my main gripe. Much of this probably comes down to the limits of the AI currently being used. I can accept that it might not be perfect, but I have not been able to work out exactly when it’s likely to succeed and fail. It’s just so damned random, and the one thing I hate in photography is randomness of results; I like to know that if I do certain things I will get a predictable result. With more usage, I may become more intuitive with the option but I’m not convinced that’s how it will pan out. I suspect portrait mode is still a bit, shall we say, quirky for things other than portraits, which is no surprise.

So What are the main Issues?

Only works really well with faces.

Only works in the range of approximately 1 to 2.5 meters.

Blur is not adjustable. (with the Apple app)

There is a tendency to get blurred edges and fuzzed up fine details, especially hair.

Works best if the background is well behind the subject without any encroaching details from the sides.

The lighting effects are hit and miss and often far too strong.

Still not a replacement for your DSLR. (like that is a surprise)

You cannot zoom within the mode.

All of the above leads to the next question, is there anything we can do to make the results better and more predictable, or to put it another way, Brad, can you give me some killer tips.

I think I can, try these on for size.

Exposure!

Be very careful with the exposure: it is not a RAW file and doesn’t respond nearly as well to tonal adjustments “after the fact”. This especially applies to the highlights, which once lost are impossible to recover and often end up with weird colour shifts.

Portraits live and breathe by their ability to properly render lighter skin tones and texture without clipping; bleached white patches on the skin just look wrong. There are three things you need to attend to.

One, make sure you have the HDR option enabled. With iOS 11 this is done within the “settings” menu, not within the app itself. Just make sure you have the Auto HDR option enabled.

Two, once you have focused slide your finger up and down on the screen to adjust the overall exposure, ensure that the skin tones you want to be recorded with detail actually are before you press the shutter. The newer iPhones are much better at controlling noise than previous models, a dark photo can be easily lightened in editing without turning into a noisy mess, and frankly, the pics often look more analogue when treated this way.

Last, if you are going to use the lighting effects, pay attention to how the effect is interacting with the exposure, it’s way too easy to overcook things at this point. Note: You can turn the effect off after the shot is taken so that’s not the problem, the real issue is that it makes it much harder to accurately judge the optimal exposure level.

My advice is to shoot without any lighting effects and then add the effect in post if you want it.

Colour?

Stupidly the iPhone still offers no way to control the white balance in the standard camera app, it’s not normally a drama as the iPhone is surprisingly good at setting an accurate auto WB most of the time, in fact far better than most DSLRs and Mirrorless cameras. But it can still mess up under odd lighting conditions or if the subject is dominated by one colour range, especially scenes with lots of yellow or red and sometimes blue in them.

There’s no easy workaround because the focus, exposure and white balance are all set with the same tap-on point, though the camera does examine the whole image for WB. You could point it at a grey object and then engage the AE/AF lock, but that will also lock the focus, so unless the subject and the grey object are at the same distance from the camera your subject may well end up out of focus.

Why has Apple failed to provide a white balance option? Probably they just wanted to keep it simple, but other brands of phone certainly don’t dumb things down this much for those with a desire for a bit of WB tweaking.

The answer would be to use another app that allows you to adjust the White Balance and still capture a depth map. In this case, you’d capture the portrait shot using the 2X camera with the depth map option turned on, the file would then be saved to the “Photos” app and edited as normal, where you can apply the Depth of Field and Lighting effects. The downside being you would not see the DOF effect when you take the photo, in that respect, it would be a bit like the Google Pixel 2 XL, where the image must be processed first.

Currently, there are few apps that can record a depth map, Halide is an example of one which can, but none I’ve tried allow you to adjust the WB when using the depth map option. I hope Halide addresses this soon as it would be an easy option for more serious shooters, better yet Apple how about you just give us a WB option…please.

Ultimately unless your WB is way off kilter it can be adjusted in post editing, if it looks like the colour is going to be beyond help then consider shooting against a less intensely coloured background and keep a close eye on the highlight exposure level.

Extending the Focus Range

Like I said, the standard range is around 1 to 2.5 meters. This cannot be changed, as the iPhone just switches automatically to regular shooting mode if the subject distance does not comply with the design parameters. However, if you use “Halide” with Depth Map enabled it seems to be able to extend the close focus range down to around 40cm or so, maybe even a bit closer. If you go too close, the editing in “Photos” seems to mess up the blurring and cause all manner of weird artefacts, but it is useful for times when you want to shoot product photos.

Halide also seems to be able to get the depth option working at a greater distance than the standard camera app does, so it represents a nice addition to your iPhoneography toolset. Note, you cannot blur foreground objects when you focus on something more than say 2.5 meters out and generally the blur in front of the object is less convincing than the background blur.

Unfortunately, you can only control the exposure compensation when in Depth mode in Halide but that’s better than nothing.

Another option for creating blur effects is to transfer the HEIF files into Photoshop and then use it to create the blur using the depth map. That would need a whole extra article so I won’t go any further here.

Just in case anyone is wondering, and I know you were, you cannot yet shoot RAW in any Portrait mode. In other words, there is no RAW option with a depth map, because the depth map can only be recorded using the HEIF format. One option could be to record both RAW (DNG) and HEIF together, but so far I haven’t come across an app that does this, and it would require more complicated post-processing to implement.

Adjusting the Blur

Presently if you wish to adjust the degree of blur you will need to use another App such as the ones I mentioned earlier or Photoshop to process the image. You have options, but there is no way around the on/off choice if you stick with the regular app. Once you start using third-party apps you can apply any degree of blur you wish.

Blurry Edge Details

This one is tricky, as I explained it’s a combination of having a less than perfect depth map and the intelligence of the algorithms used to apply the blur effect.

One thing you can do is avoid shooting against very detailed or messy backgrounds. For example, portraits taken against blue skies with a bit of cloud tend to work well, as do portraits taken where there is a big distance between the foreground and background. It’s no accident that Apple’s sample shots tend to conform to this style.

The results also look better if there is a good level of subject/background contrast. When the tones and colours in both are very similar the algorithms seem to get confused and randomly blur the wrong bits.

Another scene element type that causes grief is when you have foreground objects with cutouts in them which allow you to see through to the background elements. In this case the areas inside the cutout are often not blurred as you might expect. Of course this is not an issue with human heads, heaven forbid we should have holes in our craniums, but it does mess with product photography work. Obviously, it’s hard to avoid the issue, but being aware and picking it up before you press the shutter button will at least give you a chance to rejig the shot before you shoot.

Better Lighting Effects.

Basically, they are all a bit too much for my delicate and easily offended eyeballs, but I do have a workaround. This might sound tricky but you can do it, so long as you have an editing App that can work with layers, I suggest Snapseed, which is both excellent and free.

Take the photo using the portrait mode without any lighting effects applied and then duplicate the image on the “photos” app. Open the first image and apply the lighting effect you want and leave the second one alone.

Now open the first in Snapseed and then using the “Double Exposure” tool open the second, you can now blend the two together as you see fit. You need to have a look at the tutorials for Snapseed to see how to do this, but rest assured the results can be pretty good.
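If you are curious what that blending amounts to, the simplest version is just a weighted mix of the two pictures, pixel by pixel. The Python sketch below is my own illustration of a plain opacity blend on tiny greyscale images. I am not claiming this is what Snapseed does internally, and the function name and sample values are made up.

```python
def blend(plain, lit, opacity):
    """Mix two same-sized greyscale images.

    opacity = 0.0 returns the plain image, 1.0 returns the lit one,
    and anything in between dials the lighting effect up or down.
    """
    if not 0.0 <= opacity <= 1.0:
        raise ValueError("opacity must be between 0 and 1")
    return [
        [(1 - opacity) * a + opacity * b for a, b in zip(row_plain, row_lit)]
        for row_plain, row_lit in zip(plain, lit)
    ]


plain_portrait = [[100, 200]]   # image without a lighting effect
lit_portrait = [[200, 100]]     # same image with the effect applied

# Keep only a quarter of the lighting effect.
print(blend(plain_portrait, lit_portrait, 0.25))  # [[125.0, 175.0]]
```

The point is simply that you get a dimmer switch for the lighting effect rather than the all-or-nothing choice the standard app gives you.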

Of course whilst you are in Snapseed you have a whole bunch of other terrific effects on offer to use, so double the joy for those with a sense of adventure. There are of course a wide array of apps that can work with layers, Snapseed just represents a nice easy option for those not deeply into editing.

Modify the Light

Want to really get great results for portraits? Why not try using light modifiers, just like we do with any other regular camera? Reflectors, blockers, diffusers and artificial light sources all work with iPhones too, you know.

Think about it, isn’t this what Apple is trying to synthesise with the Lighting FX anyway? Well, why not try the real thing!

Define Your focus.

I’ve noticed that most times when people use the portrait mode they don’t actually set focus, they just leave it up to the iPhone to decide, which is often perfectly Ok. But the blur effect can be significantly different depending on where exactly you set the focus and this can be seen on the screen when using the standard iPhone app.

Accurate focus setting is especially important for “product” style photography but also applies to portraits.

Try it, just move the focus point a bit and tap, you might be surprised to find the effect looks better, or perhaps worse.

Crop Away

No, you cannot use the zoom when in Portrait mode but you can certainly crop the image in post which can be helpful for product shots or when you want a more closely framed portrait. Chances are you will still end up with 6 to 8 megapixels in the cropped image which is more than enough for regular prints and way beyond what you need for most social media pics.
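To see what that crop buys you, here is a small Python sketch. It assumes a 12 megapixel starting image and the roughly 56mm equivalent focal length mentioned earlier; the function name is mine and the results are approximate.

```python
import math


def crop_equivalent(full_mp, kept_mp, focal_mm):
    """Return (linear crop factor, equivalent focal length after cropping)."""
    linear = math.sqrt(full_mp / kept_mp)   # pixel count falls with the square
    return linear, focal_mm * linear


FULL_MP = 12    # assumption: 12 megapixel capture
TELE_MM = 56    # approximate full-frame equivalent of the tele lens

for kept in (8, 6):
    factor, equivalent = crop_equivalent(FULL_MP, kept, TELE_MM)
    print(kept, "MP left ->", round(factor, 2), "x crop,", round(equivalent, 1), "mm equivalent")
```

Cropping down to 8 megapixels gives a framing of roughly 69mm equivalent, and cropping to 6 megapixels gives roughly 79mm, so a modest crop lands right around the 70mm framing I said would have suited me better.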

It’s not a DSLR

Well Doh Einstein, of course not.

No, you’re missing my point, we have all sorts of cameras and they are suited to some needs way better than others, I think many cameras get a bad rap when people try to use them for something they were not designed for in the first place.

See I get a little bit testy with camera reviewers saying things like iPhones are rubbish cameras because they can’t shoot wildlife, distant sports, studio portraits etc. Well sure they won’t excel at that, but then your DSLR makes a pretty crap pocket camera too.

It all boils down to this: is it sufficient for the job you have in mind or is it the wrong tool for the job? Only you can decide. All I’m saying is don’t expect your iPhone to be something it isn’t, a DSLR replacement. Work within its design parameters and I’m sure you’ll find it fine for the task.

Concluding.

I’m sure that we’re going to see massive development in the area of “portrait” mode on smartphones over the next few years, really the technology is just in its infancy and probably 5 years short of full market maturity.

I can see that over the next year or so we will have many apps coming to market that leverage the depth map option and possibly do a much better job than the standard iPhone app does.

In the meantime, it remains a great feature that handily expands the shooting envelope for most smartphone users and the results while not perfect, are superior to what you get by using the standard wide angle lens for portraits and product shots.

Next up I will look at the DNG files taken with the Tele lens on the X, I think you will be pleasantly surprised.